Practical AI adoption, not PowerPoint

We help executive teams turn AI ambition into real, responsible results. From leadership clarity to adoption plans, everything we do is built for delivery, not theatre.

[ OUR MISSION ]

Help leaders adopt AI with clarity and guardrails

AI is moving faster than most organisations can process. What’s missing isn’t access; it’s alignment.

We help organisations shift from AI experimentation to sustainable enablement. That means aligning leadership, setting responsible guardrails, and embedding adoption into day-to-day decision-making.

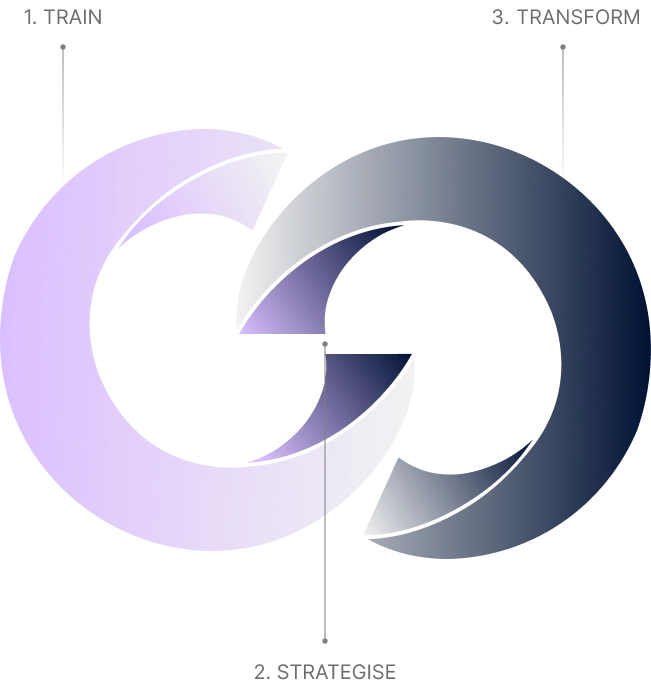

[ OUR APPROACH ]

We guide leadership teams through a structured path:

Build shared understanding across functions

Set strategic priorities that reflect your risk posture

Transform with governance-ready practices that scale

It’s fast, focused, and designed to leave your teams with clear decisions and confidence to move.

Who we work with

We work with mid-sized to large enterprises: big enough to unlock AI’s full potential, lean enough to move decisively.

Whether you’re a CIO or a commercial lead exploring use cases, we tailor programmes to meet you where you are.

We work across industries including FMCG, healthcare, services and private equity.

Meet the team

Outcomes we’ve supported

All examples anonymised for confidentiality.

Helped a C-suite align on AI priorities and risks in under two hours.

Supported a portfolio ops team in defining a scalable adoption playbook.

Designed a sector-specific AI enablement programme for client-facing teams.

Advised on data-boundary and data-usage frameworks ahead of a major AI rollout.

Ethics and responsibility

Responsible use isn’t a compliance add-on; it’s the foundation for scalable AI.

We never demo with client data.

We never experiment without consent.

We help your teams define safe boundaries, then build capability within them.

Let’s talk about your AI goals

Whether you’re starting from scratch or scaling fast, we can help you move from AI interest to confident adoption.